Introduction

Computers and software are ubiquitous, yet only a small minority of people grasp how they function. Citizens in a democracy have certain responsibilities: to stay well-informed, to vote, to critically evaluate statements by those in power. As software continues to seep into all aspects of life, its impact on society grows proportionally. This situation demands of us a basic understanding of software’s essential features.

Code

When I’m talking about software, I’m talking about every single digital thing that you interact with. Your phone’s operating system, Android or iOS; applications like Microsoft Word; mobile apps like Snapchat; interactive websites; smart TVs; video games; electronic voting machines; car entertainment systems. Software is created by writing code.

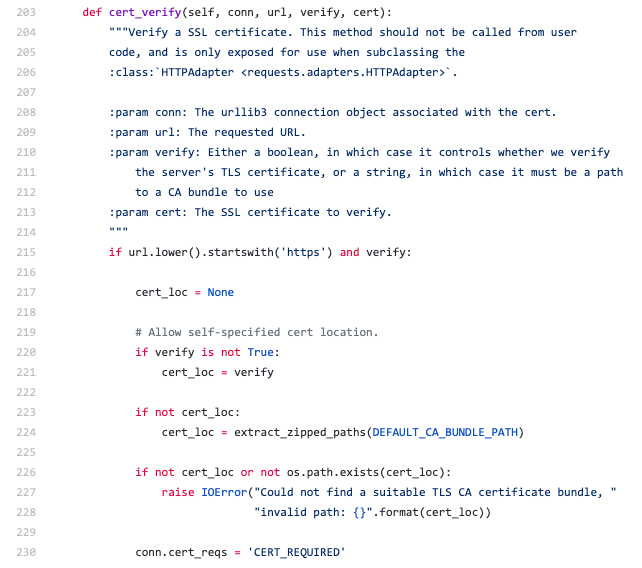

Code looks like this:

Code is just a text file, or a group of them, written according to certain rules. Often code will be used to generate an executable application that your computer then runs, like Chrome or Excel. Code is a set of instructions that tells a computer program what to do and how to respond to input like a mouse click, a screen tap, or the credit card number entered on a checkout form. Large software supports a lot of different actions, and it’s the software developer’s job to write down how those actions should be accomplished with code.

Software's Complexity

Understanding and working on large software projects is very difficult. Even a small snippet of code constitutes a rigorous set of logical statements, and understanding their behavior is an intrinsically difficult task. Big projects contain millions of lines of code; one cannot remember them all. Developers make a mental tradeoff to accomplish their work in such an overwhelming environment. It rests on the idea that a given snippet of code is meant to accomplish some task. Rather than memorizing the snippet’s code, they instead work with that higher-level task: “this code verifies the user input”, or “this code writes the data to a file”. This organization of the code into higher-level functional modules lets work proceed, but the detail is forgotten in the process. It’s similar to an argumentative essay organized into paragraphs, each arguing a specific claim. A shortcut for understanding the argument’s overall flow is to read the progression of claims made in each paragraph. You lose the detail, but you still get the argument.

Another difficult aspect of software is the expectation that things will not always proceed as planned. If you are writing the code for a website that asks for a person’s date of birth, and they input a date with the year 1765, what should the program do? What if they input their name instead of a date? Software developers are tasked with anticipating these scenarios and handling them gracefully. Often the complexity comes from the interaction between two sections of code. A developer may feel confident they’ve handled problematic inputs to their code. But what happens when the output of that vetted code is transmitted to an entirely different module of the system, a module authored by someone else entirely? Large codebases have many authors, and their contributions constantly interact with each other. Anticipating problematic interactions is a lot of work.

Some complexity stems from the fact that software always executes on a computer. A specific computer. But people use all sorts of computers! If you write a program, it may run on a Mac, Windows, or Linux computer, a smartphone, a tablet. And each of those categories contains its own universe of possible hardware configurations, plus the various combinations of user settings that device owners configure. A developer may have verified that their code works on their own computer, but what about all the others?

The notion I’m trying to put in your head is that software is really very complex. Its complexity balloons as you move from Programming 101 to large production systems. Comprehending such large systems in detail is rarely accomplished by a single person. Different people are experts in distinct modules that interlock like mechanical gears to accomplish the system’s overall work. An unsurprising result is that large systems will not work perfectly in all scenarios. There exist unanticipated situations, and situations that were anticipated but handled incorrectly by mistake, where the program doesn’t operate as intended. Such defects in software are known as bugs. They are unavoidable. In any piece of software there are bugs, likely many bugs. Developers don’t tinker with code forever, fixing all the bugs, and then release it. They launch it while it’s imperfect and keep it running with regular updates.

Bugs

Bugs are not all created equal. Some are trivial, and some are serious. A bug’s importance is based on its effects. If a bug can cause your user’s data to be deleted, or is stopping your users from using your software altogether, it’s serious. If a bug causes one percent of users to see the wrong color button when they perform a specific series of actions, it’s unimportant.

A bug’s impact depends on the software’s context. For example, consider a bug that causes a user’s data to be deleted forever. While this would be a serious bug on any team, its impact depends on what data is being deleted. If this bug is in a mobile game, and the data deleted is your game progress, this bug’s real world impact is minimal. If this bug is in your bank’s software, and the data deleted is your account information, this bug is very serious. Team resources are finite, and not all bugs are important enough to fix.

Software development teams become aware of bugs through various means. The team may find them in the course of building the software. Users report them. Some companies administer “bug bounty” programs wherein they pay members of the public for disclosing a bug. But the number and nature of all bugs in a given software product will always be unknown. As the software undergoes changes and updates, sometimes in order to fix a bug, new bugs may even be introduced.

When a team fixes a bug, users must update the software for the fix to take effect. The software industry has a spotty record accomplishing this. The endless notifications prompting users to update an application to the latest version can feel more like an annoyance to quickly dismiss rather than a valuable security fix. Thankfully the software that we access through web browsers gets updated by the development teams themselves. Still, there are many computers running outdated software, and many of those uninstalled updates contain bug fixes. Outdated software can be considered a bug; if the latest version of the software with the fix is “correct”, then an outdated version of the software is not operating correctly.

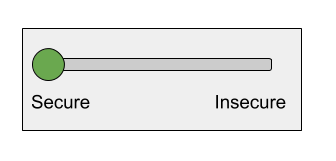

A final important class of bugs in software systems are configuration errors. Such errors are borne of negligence, convenience, or laziness. A developer’s work is often made easier if the system’s security is subpar. For example, one may leave enabled the default username and password “admin” and “admin” in some administrative software, or code something in an insecure way to make development easier with the intention of making it more secure before launching. For simplicity let’s assume the developer’s working computer is either secure or insecure.

Let’s say there’s a 95% chance that the developer working on this single machine remembers to make it secure before launching the project. You may feel pretty good about the security of this system with that probability. But large software systems are often composed of many different physical machines. With 1,000 machines and that same 95% probability per machine, it’s basically guaranteed one of them will accidentally be left insecure.

This is a simplified model; a machine’s security is not binary, it’s a continuum. If we assume each machine has multiple such secure / insecure toggles, the probability of some machine being left at least partially insecure is even higher.

Vulnerabilities

Bugs present opportunities for hackers wishing to maliciously access or modify computer systems. The collective ingenuity of the world’s hackers is impressive, and they use bugs, out of date software, and misconfigured systems like crowbars prying open back doors to slip through. Not every bug or configuration mistake is useful in this way, but some are, and we call such useful bugs vulnerabilities. The complexity of software guarantees vulnerabilities. Consider how a wealthy individual with skilled attorneys can find loopholes among byzantine tax laws. In a similar fashion, motivated hackers can poke around a given computer system and frequently find something to exploit.

What bad outcomes are possible when a hacker exploits a vulnerability? Computer systems are essentially tools for handling data. Thus in simple terms, a hacker can read data, modify data, delete data, or perhaps take down a specific service. How problematic each outcome can be depends on the system being intruded upon. Reading data is not a huge problem if they’ve accessed your Uber Eats orders. But what if the data read is a sensitive diplomatic cable, very intimate conversations, or your Social Security Number? The consequences of hackers modifying data is especially obvious if the system is an electronic voting machine. If Facebook is the service rendered inoperable, life will go on. But a government service or utility being taken offline may cause real harm.

The situation is not hopeless. Gaining access to a computer system does not guarantee a hacker anything. Once inside, they may face further obstacles. Well-defended systems are built in layers, each requiring unique methods for entry. The goal of computer security is not perfection, but increasing the cost of doing business for hackers. Best practices abound, and companies are getting more serious about data security every day.

But a healthy understanding of computers and software requires reckoning with the fact that any given computer system is vulnerable to intrusion by a motivated actor. Vulnerabilities in the form of bugs and configuration errors are sprinkled liberally throughout the software we all use. There is even a black market for vulnerabilities, with those unknown to the wider community (known as “0-days”) valued particularly highly. No matter how securely an Internet-connected system is designed and built, there is a way in.